Machine Learning

huggingface.co

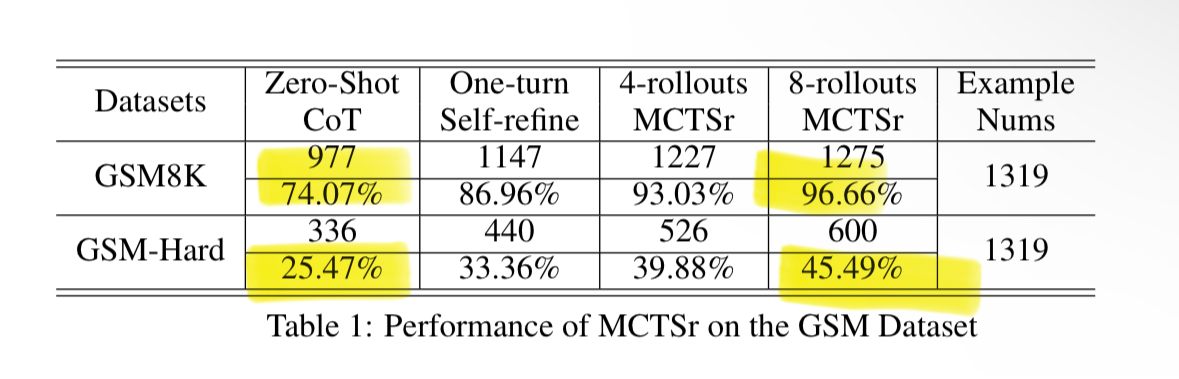

"Reflection 70B holds its own against even the top closed-source models (Claude 3.5 Sonnet, GPT-4o). It’s the top LLM in (at least) MMLU, MATH, IFEval, GSM8K. Beats GPT-4o on every benchmark tested. It clobbers Llama 3.1 405B. It’s not even close. The technique that drives Reflection 70B is simple, but very powerful. Current LLMs have a tendency to hallucinate, and can’t recognize when they do so. Reflection-Tuning enables LLMs to recognize their mistakes, and then correct them before committing to an answer. Additionally, we separate planning into a separate step, improving CoT potency and keeping the outputs simple and concise for end users. Important to note: We have checked for decontamination against all benchmarks mentioned using @lmsysorg’s LLM Decontaminator. The weights of our 70B model are available today on @huggingface here: https://huggingface.co/mattshumer/Reflection-70B @hyperbolic_labs API available later today. Next week, we will release the weights of Reflection-405B, along with a short report going into more detail on our process and findings. Most importantly, a huge shoutout to @csahil28 and @GlaiveAI. I’ve been noodling on this idea for months, and finally decided to pull the trigger a few weeks ago. I reached out to Sahil and the data was generated within hours. If you’re training models, check Glaive out. This model is quite fun to use and insanely powerful. Please check it out — with the right prompting, it’s an absolute beast for many use-cases. Demo here: https://reflection-playground-production.up.railway.app/ 405B is coming next week, and we expect it to outperform Sonnet and GPT-4o by a wide margin. But this is just the start. I have a few more tricks up my sleeve. I’ll continue to work with @csahil28 to release even better LLMs that make this one look like a toy. Stay tuned." https://x.com/mattshumer_/status/1831767014341538166

www.theatlantic.com

https://archive.is/SXZMe

When training a transformer on positionally encoded embeddings, should the tgt output embeddings also be positionally encoded? If so, wouldn't the predicted/decoded embeddings also be positionally encoded?
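For reference, here is a minimal sketch of the sinusoidal scheme from the original Transformer paper, where the same position table is added to the source embeddings and to the (shifted) target input embeddings. The helper names `sinusoidal_positions` and `add_positions` are made up for illustration, and the decoder stack itself is left out. In that setup the decoder's last layer projects to vocabulary logits, so the predictions are token ids rather than positionally encoded vectors; whether this carries over to a model that regresses embeddings directly is not something this sketch settles.

```python
import math


def sinusoidal_positions(seq_len, d_model):
    """Build a seq_len x d_model table of sinusoidal position encodings."""
    table = []
    for pos in range(seq_len):
        row = []
        for k in range(d_model):
            # Dimensions 2i and 2i+1 share the frequency 10000^(2i / d_model).
            angle = pos / (10000 ** ((k - k % 2) / d_model))
            # Even dimensions use sine, odd dimensions use cosine.
            row.append(math.sin(angle) if k % 2 == 0 else math.cos(angle))
        table.append(row)
    return table


def add_positions(embeddings):
    """Add position encodings to a list of embedding vectors (one per token)."""
    table = sinusoidal_positions(len(embeddings), len(embeddings[0]))
    return [
        [e + p for e, p in zip(emb_row, pos_row)]
        for emb_row, pos_row in zip(embeddings, table)
    ]


# Hypothetical toy inputs: 3 source tokens and 2 target tokens, d_model = 4.
src_embeddings = [[0.1, 0.2, 0.3, 0.4]] * 3
tgt_embeddings = [[0.5, 0.6, 0.7, 0.8]] * 2

encoder_input = add_positions(src_embeddings)  # fed to the encoder
decoder_input = add_positions(tgt_embeddings)  # fed to the decoder
```

Both inputs go through the same function, which is the point of the sketch: the encoding is applied to what goes into the decoder, not to what comes out of it.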

Someone (Dreamertist on Reddit) got tired of depending on Hugging Face for downloading models and is proposing a torrent tracker to share these huge blobs more efficiently. It has only just started and only a few models have been uploaded so far, but I think it is worth all of us putting our local stash online there. Making a new torrent is [super easy](https://aitracker.art/viewtopic.php?t=1) (one missing step, though: when "re-downloading" the model, you need to save it in the directory where it already exists. That way it will "resume" at 100% completion and switch to seeding mode).

Imagine AI having offspring...

Hey guys, I have been experimenting with self-supervised visual learning a bit. Until now I have only ever used U-Nets and related architectures. No matter which task, images, or other parameters I changed, I always got these stains on my output images (marked in green here), sometimes more, sometimes less. Can anybody tell me where they come from and how I could prevent them? In the attached picture the input (left) and target (right) are the same, so I can be sure these stains do not come from a badly designed learning task, yet they still appear (the output is the middle image). Thanks in advance and all the best :D Edit: added line breaks

Copilot sounds amazing on paper. The free (to 365 subs) version on the web is just ChatGPT (GPT-4), so that's familiar enough. The integration with 365 applications is really what grabs me. Stuff like tossing it 10 spreadsheets and asking it to analyze and compare the data, having a virtual assistant to remind me of upcoming actionables, and summarizing a meeting when I zone out: it all sounds really handy. I met with Microsoft last week and they're down for giving me a 90-day trial if I want to take it for a spin. Any thoughts or suggestions? Ideally I want to determine whether this will improve productivity for my end users enough to be worth the insane cost of $30/user/mo.

Hi all, I think around 1 or 2 years ago, I stumbled upon the personal blog of an Asian woman (I think) working at OpenAI. She had numerous extensive, fascinating posts on a black-themed blog, going into the technical details of language model embeddings and such. I can no longer find that blog and have no other information to go by. Would anyone possibly know which blog I'm referring to? It would be very much appreciated.

2024-02-29 | [Christopher Gadzinski](https://cgad.ski/) writes:

> Physics likes optimization! Subject to its boundary conditions, the time evolution of a physical system is a critical point for a quantity called an action. This point of view sets the stage for Noether's principle, a remarkable correspondence between continuous invariances of the action and conservation laws of the system.
>
> In machine learning, we often deal with discrete "processes" whose control parameters are chosen to minimize some quantity. For example, we can see a deep residual network as a process where the role of "time" is played by depth. We may ask:
>
> 1. Does Noether's theorem apply to these processes?
> 2. Can we find meaningful conserved quantities?
>
> Our answers: "yes," and "not sure!"

pythonspeed.com

[Itamar Turner-Trauring](https://pythonspeed.com/about/) writes:

> These sort of problems are one of the many reasons you want to “pin” your application’s dependencies: make sure you only install a specific, fixed set of dependencies. Without reproducible dependencies, as soon as NumPy 2 comes out your application might break when it gets installed with new dependencies.
>
> The really short version is that you have two sets of dependency configurations:
>
> - **A direct dependency list**: A list of libraries you directly import in your code, loosely restricted. This is the list of dependencies you put in pyproject.toml or setup.py.
> - **A lock file**: A list of all dependencies you rely on, direct or indirect (dependencies of dependencies), pinned to specific versions. This might be a requirements.txt, or some other file depending on which tool you’re using.
>
> [At appropriate intervals you update the lock file](https://pythonspeed.com/articles/when-update-dependencies/) based on the direct dependency list.
>
> I’ve written multiple articles on the topic, in case you’re not familiar with the relevant tools:
>
> - “[Faster Docker builds with pipenv, poetry, or pip-tools](https://pythonspeed.com/articles/pipenv-docker/)” covers using those three tools to maintain lockfiles.
> - For Conda, see “[Reproducible and upgradable Conda environments with conda-lock](https://pythonspeed.com/articles/activate-conda-dockerfile/)”.

Read [NumPy 2 is coming: preventing breakage, updating your code](https://pythonspeed.com/articles/numpy-2/)
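To make the lock-file idea from the quote concrete, here is a rough sketch (not taken from the article) that compares what is installed against pinned versions. It assumes a requirements.txt-style lock with plain `name==version` lines and no hashes; `parse_lock` and `find_drift` are hypothetical helper names. The installed version is looked up with the standard library's `importlib.metadata.version`.

```python
from importlib.metadata import PackageNotFoundError, version


def parse_lock(text):
    """Return {package: pinned_version} from simple `name==version` lines."""
    pins = {}
    for raw in text.splitlines():
        # Drop trailing comments and environment markers (assumed simple format).
        line = raw.split("#", 1)[0].split(";", 1)[0].strip()
        if "==" not in line:
            continue
        name, pinned = line.split("==", 1)
        pins[name.strip().lower()] = pinned.strip()
    return pins


def find_drift(pins, installed_version=version):
    """Return {package: (pinned, installed)} for every mismatch.

    `installed` is None when the package is not installed at all.
    """
    drift = {}
    for name, pinned in pins.items():
        try:
            actual = installed_version(name)
        except PackageNotFoundError:
            actual = None
        if actual != pinned:
            drift[name] = (pinned, actual)
    return drift


# Hypothetical lock contents, for illustration only.
example_lock = """
numpy==1.26.4
pandas==2.2.2  # via my-app
"""
example_pins = parse_lock(example_lock)
```

Calling `find_drift(example_pins)` in a real environment reports any package whose installed version differs from its pin, which is the kind of silent change (for example, NumPy 2 arriving unannounced) that a lock file is meant to prevent.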

cross-posted from: https://slrpnk.net/post/3892266

> **Institution:** Cambridge
> **Lecturer:** Petar Velickovic
> **University Course Code:** seminar
> **Subject:** #math #machinelearning #neuralnetworks
> **Description:** Deriving graph neural networks (GNNs) from first principles, motivating their use, and explaining how they have emerged along several related research lines.